|

LLMs are good search helpers. Here are three search tools I use every day. All of these use an AI to synthesize answers but also provide an essential feature: specific web search results for you to verify and further research. I use these for conversational inquiries in addition to more traditional keyword searches.

Phind is an excellent free LLM + search engine. The AI writes an answer to your query but is very careful to provide footnotes to a web-search-like list of links on the right. I use this mostly for directed search queries, things like “what’s an inexpensive TV streaming device?” where I might have used keyword search too. The Llama-70b LLM that powers the free version is quite good; sometimes I have general conversations with it or ask it to generate code.

Bing CoPilot produces results very similar to Phind’s. I find it a little less useful and the search result links are less prominent. But it’s a good second opinion. Bing has been a very good search engine for 10+ years; I’m grateful to Microsoft for continuing to invest in it. CoPilot results are sometimes volunteered on the main Bing page but you often have to click to get to the GPT-4 Turbo enhanced pages.

Kagi is what I use as my general search engine, my Google replacement. It mostly gives traditional keyword search results but sometimes it will volunteer a “Quick Answer” where Claude 3 Haiku synthesizes an answer with references. You can also request one. I think Phind and CoPilot do a better job but I appreciate when Kagi intercepts a keyword search I did and just gives me the right answer.

Google has tried various versions of LLM-enhancement in search; I think the current version is called AI Overviews. It’s not bad but it’s also not as good as the others.

Not mentioned here: ChatGPT or Claude. Those are general purpose LLMs but they don’t really give search results or specific references. In the old days they’d make up URLs if you asked but that’s improving.

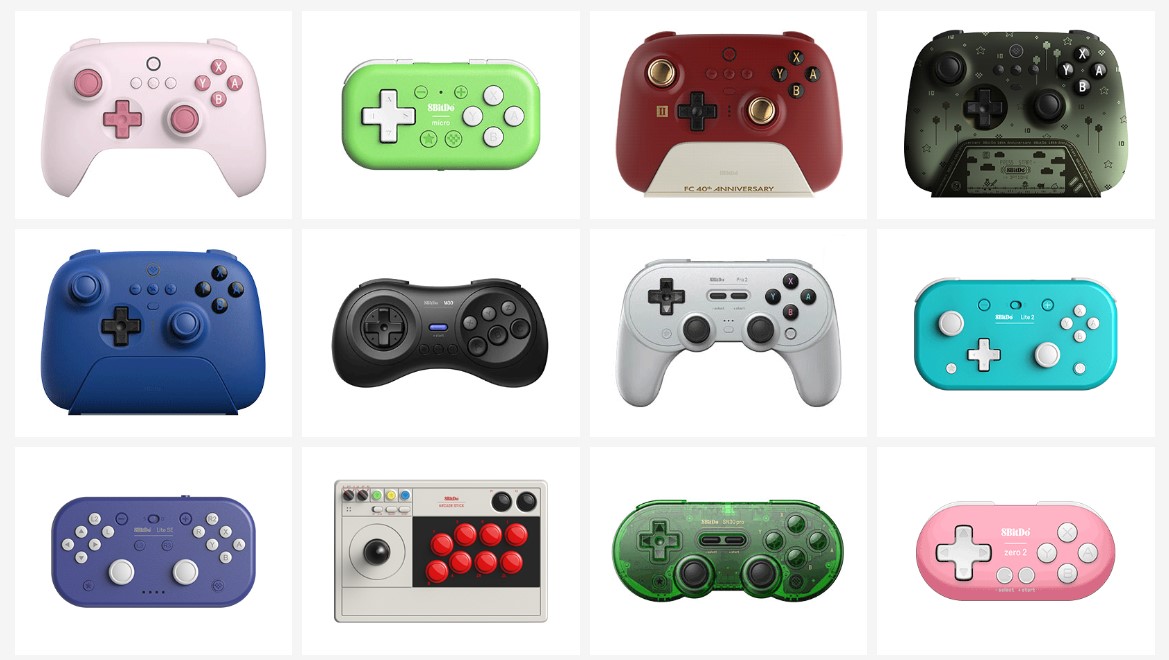

8BitDo makes good game controllers. A wide variety of styles from retro to mainstream, with some unusual shapes. And wide compatibility with various systems: PC, Macs, Switch, Android. They’re well built, work right, and quite inexpensive. A far cry from the MadCatz-style junk we used to get.

The new hotness is the Ultimate 2C, an Xbox-style wireless controller for the very low price of $30. But it works great, doesn’t feel cheap at all. The fancier mainstream choice is the Ultimate 2.4g at $50 which includes a charging stand and extra reprogrammability.

But what’s really interesting to me are the odd layouts, often small or retro. The SN30 Pro is particularly interesting as a portable controller: SNES styling but a full Xbox-style modern controller with two analog sticks, easy to throw in a suitcase.

There are a lot of fiddly details for this class of device: controller type (XInput, DInput, Switch, etc.), wireless interface (Bluetooth or proprietary), and so on. 8BitDo makes good choices and implementations for all of that stuff, at least in what I’ve tested. They seem to work well with Steam. They’re a popular brand so well tested. It helps that PC game controllers have mostly standardized around the Xbox layout and XInput. Steam can patch over any rough spots for older games.

PreSonus makes good computer speakers. They’re marketed as “reference monitors” but at $100 for a small set I have my doubts about their referenceness. Fortunately I have a tin ear and they sound just fine for my computer playing YouTube videos, compressed music, games. Wirecutter agrees.

The specific ones I have are these 3.5" Bluetooth speakers for $150. Inputs are RCA line-in, balanced, and Bluetooth, plus Aux-In and headphone jacks on the front. Decent amplifier, plenty loud for an office. There are 100 Hz and 10 kHz equalization knobs and a Bluetooth pairing button in the back. The “Gen 2” version includes an optional standby mode for power savings which seems to work fine. The cabinet is MDF and while it’s light it doesn’t have that hollow sound of cheap plastic. The website only promises 80 Hz so these are not the speakers for bass thumping.

Fifteen years ago I recommended the M-Audio speakers. IIRC their quality went downhill, maybe they went to plastic enclosures? I also had some Creative Mackie speakers but they have a manufacturing problem that causes them to fail after a few years. We’ll see how long these PreSonus ones last.

I’m self-conscious about how this post looks like a spammy marketing affiliate page written by an AI. It’s not! I just like the product.

Phanpy is good software for reading Mastodon or other Fediverse posts. Astonishingly it’s an open source passion project from a single developer, Chee Aun. Its quality is extraordinary, better than most commercial social media software.

There are so many good things about Phanpy that it’s hard to know where to start. It has several innovations for reading social media. My favorite is the Boost Carousel, a way to collapse the ordinary spammy boosts / retweets so they don’t overwhelm original posts. There’s also the catch-up UI, a novel approach to the problem of helping you read the last 12+ hours of posts quickly.

Mostly I like Phanpy because it’s just very high quality.
All the little things work so well, like the post UI and the image display and the notifications. The account switcher is great too. So many software products are full of rough edges and bugs and annoyances. Phanpy is immaculate.

It’s easy to get started. Phanpy runs as a PWA so there’s not even really an install: you just visit the website, approve the login from your main Mastodon host, and you’re up and running. I use it that way in my browser on desktop but have it installed as a formal PWA on my phone. Works great, including notifications.

Honestly surprised a product of this quality is an open source project. AFAICT Chee Aun has worked full time on it for at least a year and he is very good at what he does. He has a modest request for sponsors but I hope somehow his work ends up paying him very well or compensates him in some other way.

Google search is overwhelmed with spam these days. Back in January I switched to Kagi and have been happy with it. It’s not free but there’s a limited trial to check it out. I pay $10/mo for unlimited access. Turns out I do about 50 searches a day.

I’m unclear on how Kagi works or why it’s better than Google. It seems to be returning more quality results and fewer SEO-churn old-but-look-new pages. I see some AI-padded content in the results at times but mostly better stuff. I assume under the hood it’s mostly Bing. Whatever they’re doing works for me, a bit of a surprise since the similar DuckDuckGo has never succeeded for me.

Kagi is ad-free. It has some interesting advanced features but I don’t use them often. Honestly most of my queries are navigational. Kagi does have a new sidebar LLM feature where it generates a synthetic answer with references, much like Bing; sometimes I find that useful.

My biggest annoyance is that Kagi’s local and maps search is nowhere near as good as Google’s. It’s Apple Maps; their cartography is good these days but they don’t have the local search data with user reviews.
Also Kagi doesn’t work in incognito mode because I’m not logged in. They have a workaround for it but then you lose anonymity.

I have a feeling I’m going to be changing search engines several times in the next few years. It’s a shame Neeva didn’t make it; I feel like now is the best time ever for serious search competition. I’m grateful Bing is still viable. And maybe Google will finally get its act together.

The underlying data model is its genius. Backups are stored in a repository, some complex hash-index blob store that I don’t understand at all. But it seems able to quickly store blocks of data and de-duplicate them so incremental backups are efficient. It’s encrypted and the blobs in the repository are stored in a simple filesystem. That makes it easy and safe to back up to all sorts of places including untrusted remote stores. I’m doing remote backups to Backblaze’s S3-like filesystem for about $1/month.

The repo format means you need a working copy of restic to restore your files. I’m OK with that, it’s open source. And the tool is great. It has options for bulk restore, individual file restore, and interactive restore via a FUSE filesystem. Also a check command you can use to verify subsets of the backup on your own schedule.

The basic command line tool is good but limited. I’m using resticprofile as a frontend. You set up a single config file and it takes care of running restic for you, even scheduling itself in cron. It’s a bit idiosyncratic but seems to work fine once set up. backrest is another frontend; I haven’t tried it.

Shout out to rsnapshot, I’ve been backing up with it for 18 years now. Time for something new. rsnapshot is pretty slow on lots of little files and remote backups were awkward. Years ago I said 5 minutes to do an incremental backup of 165GB was good; that takes more like 5 seconds in restic now.

Proxmox is good software for a home datacenter. It’s an OS you install on server hardware that lets you easily run multiple virtual machines and LXC containers. It also manages disk storage and has some more complex support for high availability in a cluster, distributed storage via Ceph, etc. But even with a single small server running a single VM Proxmox offers advantages.

I’ve had a Linux server in my home for 20+ years now. Every few years I have to rebuild it, often from the ashes of failed hardware, and it’s always a tedious manual process.
Now my server is truly virtualized, a nice tidy KVM/QEMU virtual machine with a disk I can snapshot and back up. And migrate an exact copy to new hardware in minutes.

Right now I’m mostly running my stuff in one big VM under Proxmox that I migrated from the old server. But I’m slowly moving services to separate VMs and LXC containers. So now my SMB server for Sonos lives in one container, and my Plex server in another, and my Unifi router manager in a third. All running isolated from each other. This feels tidier, more manageable.

Proxmox does a lot of nice things for home-scale servers. It handles ZFS for filesystems, including snapshots and backups. It has a nice web GUI for managing things, even graphical consoles where needed. And I like how it supports both VMs and containers as first-class things.

There are other ways to manage guest systems, like Docker (containers only) or VMware ESXi (proprietary, VMs only). Proxmox feels like the right scale for me. I’ve spent about a month tinkering with it and like the software quite a bit. It’s usable, well documented, and seems well designed.

Obsidian is good software for taking and organizing notes. There are many apps for this task; Obsidian is my current favorite. In the past I’ve used a text file, Simplenote, Standard Notes, Joplin. I never used emacs.

The core Obsidian data model is “a folder of markdown files”. That’s it, really basic, and the files are easily usable as ordinary files. There’s natural support for links between notes. There’s also a metadata option I don’t use. I appreciate that it’s easy to move files in and out of Obsidian.

But where Obsidian really shines is the plugin ecosystem. I don’t actually use many plugins, just HTML export and system tray. But I appreciate the power. If you check the reddit you’ll find an enthusiast community that does a lot more complicated stuff, turning their Obsidian archives into 1000+ article infobases. Me, I just write grocery lists and blog posts.

Obsidian is not open source. They’re thoughtful about why not. (Logseq is a popular open source alternative.) The core product is free and works great. I am paying $96 per year for syncing. It’s pricy but it works well and I want to support the company. You can do your own free sync but none of the options work as easily.

I want to give a shout-out here to Simplenote, an excellent and venerable free product. And after a brief lull development started again in 2020. Kudos to Matt and Automattic for supporting that tool. I like Obsidian’s fanciness but Simplenote is pretty great.

Recently I switched to a new calorie counting app, Cronometer. I’m quite happy with it. It’s a huge improvement over MyFitnessPal (MFP) or Lose It and is not exploitative like Noom.

The key improvement with Cronometer is accuracy, particularly good data sources for nutrition information. MFP offered obviously wrong entries from random people, sapping my confidence. Also it’s quicker to log things from a trusted database.

And the app works well. Cronometer’s UI is modern and easy to use. It doesn’t display extra distractions. MFP’s insistence on scolding me about things I don’t care about was a bummer. The data sync is fast. And they have a good data export, something MFP won’t do.

I have some minor complaints.
Cronometer is very excited to track macros and every single obscure nutrient (threonine, selenium?!). I really only want to track calories. Fortunately the other things don’t take up too much space. They also display ridiculous calorie precision in the diary. But that feels like a rare UI mistake, not a general design ethos.

The free version is pretty complete. The $55/year paid plan adds a bunch of stuff; the one I care about is dividing your diary up into individual meals.

I have a long history with food diaries, more off than on. Having a good app that I trust and that is easy to use is important.

That’s the post. What are passkeys? I don’t have answers, just questions.

I believe passkeys are a great idea but the tech world is doing a terrible job explaining them. Someone really needs to explain how passkeys work in Internet products. Existing descriptions aren’t sinking in, as evidenced by the confusion online. For instance this Hacker News discussion where a new Passkey product announcement is met with a bunch of basic questions about what Passkeys even are.

Update: see these newer Passkey overview articles here and here. Also my own notes written after this was published.

The tech is pretty well defined: Passkeys are a password replacement that uses WebAuthn to log you in to stuff. Companies are widely deploying them now: Apple, Google, Microsoft, 1Password. Passkeys are an industry consensus and are arriving in production very soon or already have. Great! Now then what are they really? Here are some questions from my perspective as an ordinary if expert Internet user. I own a few computers and phones and don’t want to trust just one company with my entire digital identity.

The core of many of these questions is exactly what a passkey is. What I want to read is an article that explains the gestalt of passkeys and identity on the Internet in a way that makes the answers to all these questions clear.

My understanding from what I’ve read is that passkeys are an authentication token, basically a replacement for a single secret like a password. Naively that’d mean I’d need a different passkey for every website I log in to (just like I need different passwords). But I could be wrong. Or maybe the passkey intention is that we use federated logins, so sites like my Mastodon server use Google to help me log in with my Google passkey? (That’s an enormous business problem, if so.)

My other understanding is that a lot of my questions don’t have good answers yet, e.g. revocation of a passkey or migrating to new devices. The product announcements from various companies say “trust us, that’s coming soon”. But I do not trust a company like Google or Apple to later add a feature that will make it easy for me to migrate away from their loving embrace. That stuff has to be defined and working before Passkeys are a good product for consumers and the Internet.

Update: Ensuing discussion has made one thing clear: you don’t share passkeys between sites. You have a separate passkey for each thing you log in to. That clears up several of my questions. I don’t know how I didn’t understand that already but the confusion isn’t mine alone.
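For readers who think in code, here is a toy sketch of the "separate passkey for each site" idea. It is not the WebAuthn API and not how any vendor implements passkeys. The `Authenticator` and `Site` classes and the example domains are hypothetical, and a Lamport one-time signature (built from the standard library's `hashlib`) stands in for the reusable signature algorithms that real passkeys use. It only illustrates the shape: the device keeps one private key per site, each site stores only a public key, and login means signing a fresh challenge.

```python
import hashlib
import secrets


def _h(data):
    return hashlib.sha256(data).digest()


def _bits(message):
    """The 256 bits of SHA-256(message), most significant bit first."""
    return [(byte >> (7 - i)) & 1 for byte in _h(message) for i in range(8)]


def generate_keypair():
    """Lamport key pair: 256 pairs of random secrets, and their hashes."""
    private = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public = [(_h(a), _h(b)) for a, b in private]
    return private, public


def sign(private, message):
    # Reveal one secret from each pair, chosen by the message hash bits.
    # A Lamport key is only safe to use for a single signature.
    return [private[i][bit] for i, bit in enumerate(_bits(message))]


def verify(public, message, signature):
    if len(signature) != 256:
        return False
    return all(
        _h(sig) == public[i][bit]
        for i, (sig, bit) in enumerate(zip(signature, _bits(message)))
    )


class Authenticator:
    """Hypothetical device-side store: one private key per site."""

    def __init__(self):
        self._keys = {}

    def register(self, site):
        private, public = generate_keypair()
        self._keys[site] = private
        return public  # only the public half ever leaves the device

    def login(self, site, challenge):
        # The site name is part of the signed message, so a signature
        # made for one site is meaningless to another.
        return sign(self._keys[site], site.encode() + b"|" + challenge)


class Site:
    """Hypothetical server side: stores a public key, never a secret."""

    def __init__(self, name):
        self.name = name
        self.public_key = None

    def enroll(self, public_key):
        self.public_key = public_key

    def check_login(self, challenge, signature):
        message = self.name.encode() + b"|" + challenge
        return verify(self.public_key, message, signature)


device = Authenticator()
mastodon = Site("mastodon.example")
shop = Site("shop.example")
mastodon.enroll(device.register(mastodon.name))
shop.enroll(device.register(shop.name))

challenge = secrets.token_bytes(16)
signature = device.login(mastodon.name, challenge)
print(mastodon.check_login(challenge, signature))  # the site it was made for
print(shop.check_login(challenge, signature))      # a different site
```

The first check succeeds and the second fails, which is the point of the update above: nothing a site stores is a shared secret, and nothing the device produces for one site is useful at another.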

There really needs to be a good, clear description of Passkey as a product so questions like this aren’t being asked over and over again. I’m hopeful the folks working on this stuff understand the answers and just haven’t communicated it well. |

||