|

Since my one day map hack a few months ago I've been doing more work with open source geographic software. There's a lot to learn and I've yet to find a good simple introduction, so here are some notes on resources and how they fit together.

Libraries. There's a lot of core math and data structures for GIS. GEOS implements the basics of OpenGIS geometry: data types, simple calculations, etc. GDAL is the library + utilities for working with raster georeferenced data (maps). GDAL also includes OGR for working with vector data (shapefiles). Finally Proj is the library for translating data from one spatial reference system (identified by an SRID) to another. GEOS + GDAL + Proj give you most of the core library functions you need for working with geographic data. They're C and C++ libraries and fast, but have bindings for more humane languages as well.

Data formats. GeoTIFF is a common format for raster data. There are a zillion others GDAL supports, but GeoTIFF is the native format. Esri Shapefile is a common format for vector data, but you also see a lot of GPX, KML, GeoJSON, etc.

Database. PostGIS is the most common OSGeo database. It adds a bunch of datatypes and functions to Postgres. There are also geo extensions for other databases like MySQL and Oracle. Often a relational database is unnecessary: files + GDAL go a long way.

Web development. There are lots of options for using geographic data in a web application. GeoDjango is a great choice for building a webapp. MapServer and Mapnik are two options for rendering custom maps on a web server. I really like Polymaps for doing map display and compositing in JavaScript.

Desktop applications. Not everyone wants to write code. QGIS is a good desktop application for manipulating geographic data. There's also GRASS, OpenEV, and uDig. These programs are focused on displaying and editing geographic datasets, for making and updating custom maps by hand.

Datasets. OpenStreetMap is a huge resource for free road map data. geodata.gov is a portal for US government datasets where with enough digging you can find interesting data. There's a lot of other data scattered about, but I haven't found a good catalog.

Community. OSGeo and OpenStreetMap are two centers of the open source geo hacking world. slashgeo is a good news site, as is the GIS forum. GIS StackExchange is looking promising.

I'm still new to all this so if I misstated or overlooked something please email me.
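To make "translating data from one spatial reference system to another" a bit more concrete, here's a minimal sketch of one such translation: WGS84 longitude/latitude to spherical Web Mercator (the projection most web map tiles use). This is plain Python written for illustration, not Proj's API; the function names are made up, and a real project would call Proj through a binding rather than hand-rolling the math.

```python
import math

# WGS84 semi-major axis in metres; spherical Web Mercator uses it as the sphere radius.
EARTH_RADIUS_M = 6378137.0


def lonlat_to_web_mercator(lon_deg, lat_deg):
    """Project WGS84 longitude/latitude in degrees to Web Mercator metres."""
    x = EARTH_RADIUS_M * math.radians(lon_deg)
    y = EARTH_RADIUS_M * math.log(math.tan(math.pi / 4 + math.radians(lat_deg) / 2))
    return x, y


def web_mercator_to_lonlat(x, y):
    """Inverse of lonlat_to_web_mercator: metres back to degrees."""
    lon_deg = math.degrees(x / EARTH_RADIUS_M)
    lat_deg = math.degrees(2 * math.atan(math.exp(y / EARTH_RADIUS_M)) - math.pi / 2)
    return lon_deg, lat_deg


if __name__ == "__main__":
    # Round-trip a point near San Francisco.
    x, y = lonlat_to_web_mercator(-122.4194, 37.7749)
    print(x, y)
    print(web_mercator_to_lonlat(x, y))
```

The formula only works away from the poles, which is why web maps cut off at roughly 85 degrees of latitude.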

I wanted to give a thank you to Lyndon Asuncion, proprietor of the "Some bits of Art" blog. He'd registered somebits.wordpress.com a while back but wasn't using it and very kindly gave me the name when I asked. I'm planning on moving this blog over there soon and this was an important first step.

Some bits of Art is a nice work blog, btw; he's a video game artist in Seattle. I like his work on this house.

The Gawker blog network got hacked by some pissed off hackers who released a dump of the emails and encrypted passwords of 1.2M Gawker users. Cracking a password database like this is pretty easy (see Duo Security), I've seen 180,000 cracked already. Top 5: 123456, password, 12345678, lifehack, qwerty.
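Why is cracking easy? A hashed password can't be reversed, but an attacker can hash a list of likely guesses and compare. Here's a minimal sketch of that dictionary attack. The hash function (salted SHA-256), the user names, and the table layout are all assumptions chosen for illustration, not a description of what the Gawker database used.

```python
import hashlib


def hash_password(password, salt):
    """Stand-in password hash (salted SHA-256); an assumption for illustration only."""
    return hashlib.sha256((salt + password).encode("utf-8")).hexdigest()


def dictionary_attack(leaked, wordlist):
    """Return {user: password} for each leaked (salt, digest) that a wordlist guess matches."""
    cracked = {}
    for user, (salt, digest) in leaked.items():
        for guess in wordlist:
            if hash_password(guess, salt) == digest:
                cracked[user] = guess
                break  # stop at the first matching guess for this user
    return cracked


if __name__ == "__main__":
    wordlist = ["123456", "password", "12345678", "lifehack", "qwerty"]
    # Hypothetical leaked table: user -> (salt, digest).
    leaked = {
        "alice": ("a1", hash_password("qwerty", "a1")),
        "bob": ("b2", hash_password("5Bfw7Gvil4Eg", "b2")),
    }
    print(dictionary_attack(leaked, wordlist))
```

The weak password falls to a five-word list; the random one survives only because nobody would think to guess it.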

Poor Gawker, they're screwed, right? No, we're all screwed. People frequently use the same password on multiple sites. Now Twitter is awash in acai berry spam thanks to shared Gawker passwords. This kind of database theft happens all the time; the only difference with Gawker is the stolen goods were released publicly.

You can laugh at the people who use weak passwords, but do you really want to remember a random string like 5Bfw7Gvil4Eg to comment on the latest Valleywag gossip? You can laugh at the people who share passwords at multiple sites, but seriously, who's got the time to manage 300+ different strong passwords?

How do we end passwords? OpenID for logins to most sites. Two factor authentication to secure important passwords. This stuff works right now: I applaud the StackExchange sites for launching using only OpenID login. And I applaud Twitter for shipping OAuth: folks who posted to Gawker via their Twitter identities weren't compromised.

Passwords are an inhumane form of account security. They are bad user design. It is time to stop using passwords for most sites.

The thing that impressed me most looking at Google's CR-48 netbook was that the CapsLock key was turned into a search key. That makes perfect sense! I'd been using a modified version of WinUrl for years to search Google from the keyboard, but Win-W is awkward. So why not CapsLock?

I'll tell you why not: because rebinding CapsLock on Windows is a royal PITA. Fortunately AutoHotkey has it all figured out. So here's an AutoHotkey script to search Google with whatever's in your clipboard. It's smart enough to open full URLs directly, too. AutoHotkey is a bit complicated to configure, but the basic idea is to paste my script into the default script it creates.
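The decision the script makes is simple: if the clipboard text looks like a URL, open it; otherwise turn it into a Google search. Here's a rough Python sketch of that logic. It is not the AutoHotkey script itself; the function names and the URL-detection pattern are assumptions for illustration, and the caller is expected to supply the clipboard text.

```python
import re
import webbrowser
from urllib.parse import quote_plus

# Treat text as a URL if it is one whitespace-free token starting with a scheme or "www.".
URL_PATTERN = re.compile(r"^(https?://|www\.)\S+$", re.IGNORECASE)


def target_for(text):
    """Return the address to open for a piece of clipboard text."""
    text = text.strip()
    if URL_PATTERN.match(text):
        # Bare "www." addresses need a scheme before a browser will open them.
        return text if text.lower().startswith("http") else "http://" + text
    return "https://www.google.com/search?q=" + quote_plus(text)


def open_clipboard_text(text):
    """Open the URL or search results in the default browser."""
    webbrowser.open(target_for(text))
```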

Google just launched the Chrome Web Store where users buy and install "web apps" in Chrome. But all the examples I tried were just bookmarks to ordinary web pages. So what's an app? It's explained better here and there's a full article here.

Hosted apps are basically fancy bookmarks. A manifest file specifies a name, an icon, etc. It also has support for elevated permissions (accessing the user's location, for instance) and an auto-update mechanism. It only takes 15 minutes to turn any web page into a hosted app. It's a sensible netbook feature, allowing a fancy icon that says "Email" to click on instead of a generic bookmark.

Packaged apps are more complex, more like browser extensions in that the code is installed locally and has extra APIs into the browser. But where extensions are for modifying pages (say, by removing ads), packaged apps have their own UI for a specific task, like a Twitter client or a game. Packaged apps can run entirely offline.

Once an app is built it can be uploaded to the Chrome Web Store: there's a good tutorial on the process. There are some minor hoops to jump through but no mention of a required review before going live. The store is an interesting new marketing channel but I'm skeptical that for-pay apps will thrive.

We're at a transitional moment for web apps: distinctions between web sites and local applications are being blurred by HTML 5's application caching capability and APIs like local storage. If I were building a web app now I'd build it entirely in generic HTML 5 that works in any browser but uses all the fancy new HTML 5 stuff to make it work like a locally installed application. Then I'd make it a Chrome hosted app to take advantage of the Web Store marketing channel. I'd avoid the extension / packaged app route unless there's some technical capability I really need that's missing in HTML 5.
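To give a feel for how little a hosted app is, here's a sketch that generates a manifest for one. The field names (`name`, `version`, `icons`, `app.urls`, `app.launch.web_url`, `permissions`) are recalled from the hosted app manifest format of the time and should be treated as assumptions to check against Google's documentation; the app name, URL, and icon file are hypothetical.

```python
import json


def hosted_app_manifest(name, launch_url, icon_path, permissions=()):
    """Build a hosted-app style manifest as a JSON string (field names are assumptions)."""
    manifest = {
        "name": name,
        "version": "1",
        "icons": {"128": icon_path},
        "app": {
            "urls": [launch_url],                # pages that belong to the app
            "launch": {"web_url": launch_url},   # page opened when the icon is clicked
        },
        "permissions": list(permissions),        # elevated permissions, e.g. location
    }
    return json.dumps(manifest, indent=2)


if __name__ == "__main__":
    # Hypothetical "Email" app pointing at an ordinary web page.
    print(hosted_app_manifest("Email", "https://mail.example.com/",
                              "icon_128.png", ["geolocation"]))
```

Everything else about the app is just the web page at the launch URL, which is why it amounts to a fancy bookmark.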

I've been following the WikiLeaks story with some interest. The hysteria surrounding the cable leaks is downright un-American.

The most disturbing thing is what looks to be a coordinated campaign to deny WikiLeaks access to American Internet companies. Amazon dropped them. PayPal dropped them. EveryDNS dropped them. Tableau dropped them. All of these companies have the right to choose whom they do business with. But freedom of the press requires a press. It's particularly troubling to think that government pressure is behind the shutdowns.

Also disturbing is Interpol chasing Assange over a minor Swedish criminal charge. I don't want to dismiss the rights of the accusers in Sweden, but the timing and publicity make it obvious the international warrant has more to do with revenge than sexual impropriety. Now the story is "WikiLeaks = Rapist", a smear which discredits the cable releases without addressing their content.

And then you get the crazy shit. A sustained DDoS attack against the WikiLeaks site which does nothing to stop the cable release but does make it harder for the organization to explain what they're doing. And a herd of nutjobs saying Assange should be assassinated. The air is poisoned.

What makes the response seem like hysteria is that the actual cable leak doesn't seem that big a deal. WikiLeaks is being responsible about redaction. The source material, while private, was hardly ever kept that secure. The cables were stored globally on SIPRNet where thousands to millions of people had access. Anything shared with thousands of people is not, practically speaking, secret. And the information coming out so far has hardly proven to be blockbuster. It has been interesting, though, and embarrassing.

Clearly the cable leak is against the US government's interests and of course the government is going to respond. But the response is looking a little crazy and disproportionate, not to mention ineffective. In the meantime news organizations every day are turning the material into fascinating stories about the inner workings of US relations with North Korea, China, Italy, Russia, Afghanistan. In the end the disclosure may well create more value than harm.

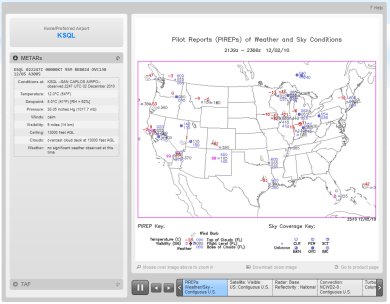

The new weather.aero site is a great example of government web design done right. Check it out! It's an experimental version of the venerable aviation weather site ADDS, a joint production of NWS, NSF, NOAA, and FAA. The new design is great: simple and clean, with underlying web services to allow mashups with the data. What the kids these days call "Web 2.0".

My favourite part of the design is how uncluttered it is. The home page contains the key products: a text METAR for home airport weather and a slideshow of weather map graphics showing national aviation conditions and forecasts. The slideshow UI is attractive with a tidy mouseover zoom. Drilling down a layer there's good, consistent page UI for different weather views like winds, radar images, and forecasts. There's nothing revolutionary about the UI but it's very cleanly executed and looks like 2010 instead of the previous 1998esque product. Usable simplicity achieved.

In addition to the web page views there's a very interesting web service that lets you easily get XML or CSV data for weather observations, pilot reports, etc. This data has been available for integration before (for instance, via ftp) but this new web service is nicely centralized with thoughtful REST query options like "all METARs along this flight path".

The site has modern community outreach, too. The team is on Twitter and Facebook and has a Nabble-hosted forum. And it's not all faceless bureaucrats: you can even see and learn more about the developers.

The US government produces all sorts of amazing software and data. But it's a culture apart from the Internet startup world and, to be honest, has often produced crappy websites. The new ADDS is a different thing entirely, a very nice bit of work. I'm excited to see a new generation of technology produced by government agencies.
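Using a web service like this from a script mostly comes down to building a query URL and parsing the CSV that comes back. Here's a minimal sketch of both halves. The endpoint is a placeholder, and the parameter names (`dataSource`, `requestType`, `format`, `stationString`, `hoursBeforeNow`) are assumptions about the query interface rather than a verified reference, so check the service's own documentation before relying on them.

```python
import csv
import io
from urllib.parse import urlencode

# Hypothetical endpoint; substitute the real data server address.
BASE_URL = "https://weather.example/dataserver"


def metar_query_url(stations, hours_before_now=2, fmt="csv"):
    """Build a query URL for recent METARs at the given stations (parameter names assumed)."""
    params = {
        "dataSource": "metars",
        "requestType": "retrieve",
        "format": fmt,
        "stationString": " ".join(stations),
        "hoursBeforeNow": hours_before_now,
    }
    return BASE_URL + "?" + urlencode(params)


def parse_observations(csv_text):
    """Parse a CSV response with a header row into a list of dict-like rows."""
    return list(csv.DictReader(io.StringIO(csv_text)))


if __name__ == "__main__":
    print(metar_query_url(["KSFO", "KOAK"]))
```

Fetching the URL (with `urllib.request`, say) and handing the response text to `parse_observations` is all a simple mashup needs.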

Update: I misattributed who built the experimental ADDS site. The site was built by NCAR, with funding and support from NWS, NSF, NOAA, and FAA. NCAR itself is not a government agency, but rather an R&D center that receives a lot of federal funding. Its foundation story is interesting, a 1960 solution to the same sort of culture gap I mentioned above.

File this in the rumour bin: a leak of Blizzard's product roadmap, with some analysis here. It may or may not be true, but it looks plausible. But the big news is the last item, Titan, releasing Q4 2013. Could this be Blizzard's long-rumoured secret new MMO? We know they've been hiring for it, and we know many of the original WoW developers were pulled off of Warcraft right after the Burning Crusade launch to work on something secret and new. But that's all we know. But now we have a name. Project Titan. I've found a possible confirmation: Google delving turns up a guy named theNoid who claims to have an inside source at Blizzard. He's been talking about "Project Titan" at Blizzard for the last 18 months.

I also found another mention of the "Project Titan" name in September 2010 from a different source, but no idea if he's independent:

Taken separately, both the leaked product roadmap and the forum posts from "theNoid" are plausible but not particularly trustable. But the fact these two independent rumours confirm each other is quite interesting. The name "Titan" for a Blizzard MMO is rich irony, btw, since Titan was also the name of the cancelled Halo MMO. Particularly if Blizzard's new thing ends up being an MMOFPS, as is rumoured. Who knows, maybe someone worked a deal and it's actually some of the same people? It seems very unlikely Blizzard would release a game based on the Halo IP. |

||