|

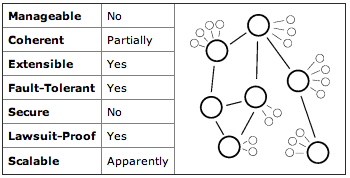

Ten years ago I wrote a pair of articles for O'Reilly on distributed systems topologies: Part 1 and Part 2. I wrote them because a lot of people were confused about what "peer to peer" meant in system design, so I tried to classify the various ways computers could talk to each other to make a single system. The articles hold up pretty well, even if a lot of the analysis seems embarrassingly naïve. We don't sweat the topological details so much anymore, but I think there's an echo of these themes in the discussion of how various NoSQL data stores manage data availability and consistency.

About once a year I get an email from someone who wants to start a company selling CPU time from idle computers, typically by running a screensaver or background job on public computers. Back in 2000 I co-founded Popular Power, a company to do just that, which failed about 15 months later. Here's what I tell the people who write me looking for advice.

The primary challenge is finding customers. It is difficult to convince any company to risk sending their software and data out to untrusted third parties. There are no good technical measures for protecting intellectual property on untrusted computers. In addition, scavenged cycles are an awkward mode of computation and potential customers have to port their code before they can even try it out. A company's primary value proposition is price, but you're competing with the ever-decreasing cost of computer time. And the market is mostly $200,000+ enterprise sales. It always takes 12–18 months to close a contract at that scale, and that's a long time to wait for a cash-poor startup.

A couple of things have changed for the better in the past ten years. Cloud computing is now mainstream, so there's no need to explain the concept. And distributed computing has improved with paradigms like MapReduce. Computers have gotten faster, although that doesn't specifically favor scavenged computing. Unfortunately bandwidth has not gotten significantly cheaper, so data transfer is still a barrier.

Fate has not been kind to this market in the last ten years: see my 2007 retrospective. Since then United Devices merged with Univa and is still operating, although presumably never worth anything like the $45M in venture investment it raised. Data Synapse got bought by TIBCO for a rumored $28M, more than zero but not the $200M+ value of a real success. I'm glad people keep trying to make this business work, but it's a tough road. In the meantime free projects like BOINC are having some success providing low-cost computation for public research projects. And of course the botnets are owning the Internet.

I had awful service from Virgin America recently. A flight cancellation, a software system disaster, and many mistakes from agents on the ground all worked together to make a truly awful travel day. I like what Virgin is trying to do, but until they get their act together after their disastrous switch to a new reservation system I suggest avoiding them.

On Nov 2 Ken and I had Main Cabin Select tickets from JFK to SFO. That morning I called and paid extra for first class upgrades (score!). Then two hours before departure, the flight was cancelled. The scene at JFK was chaos because Virgin had just that week changed its online reservation system and it wasn't working. The staff didn't know how to use it, the system didn't work, and customers were sitting on the floor waiting for things to get straightened out. Rebooking us was beyond the JFK staff's ability to cope. The nice woman at the desk kept saying "we have this flight, do you want it?" and then spending ten minutes trying to book it, only to find some other agent had grabbed the seats while she was typing. She finally rebooked us on a different airline. Only they booked it wrong, twice, and I spent 90 stressful minutes going between terminals three times trying to get the flight booked correctly. I will say the agent we talked to was pleasant and professional. She just was unequipped to make Virgin's own software work. And then the staff were so stressed and rushed they kept screwing up the booking on the other airline. Poor service is de rigueur for airlines, but Virgin America claims to be something different. Sadly it wasn't last month.

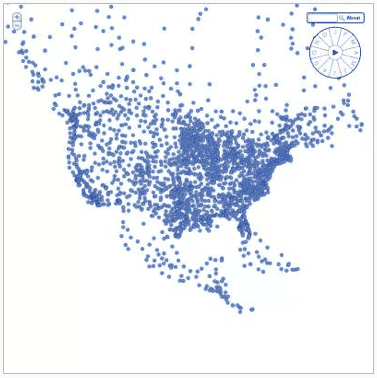

This is interesting: Google's preview for my Wind History project shows they executed quite a lot of JavaScript and SVG code.

Related, a few weeks back Matt Cutts tweeted that Googlebot is executing some JavaScript when indexing. (See this analysis and my earlier comments.) It's worth noting that the Googlebot indexer is almost certainly an entirely different system from the preview image generator. I suspect the index-building crawl still runs very little JavaScript code and almost certainly doesn't render pixels; speed is important for freshness. (A remaining puzzle: why the image tiles from OpenStreetMap aren't in that preview. They're also loaded in JavaScript. They may have loaded too slowly, or maybe the preview generator doesn't load content from external domains?) |

||